Cognitive Services gets better at handling rich information

By Mary Branscombe, 14 May 2019

The Azure Cognitive Services APIs and SDKs are a straightforward way to use pre-trained but often customisable machine learning models for everything from image recognition to speech synthesis. Developers can add them to a website or an app or call them as part of a distributed machine learning pipeline in Spark ML, making them very flexible components whatever your level of data science expertise. At the Build 2019 Conference, Microsoft announced that over 1.3 million developers are already using Cognitive Services.

Four existing services came out of preview at Build 2019: Neural Text-To-Speech for generating voices lifelike enough to use for large amounts of text like reading an audiobook; Computer Vision Read for recognising text and handwriting in images; Named Entity Recognition in Text Analytics for finding the names of people, places, products and organisations mentioned in text; and Cognitive Search in Azure Search, for exploring and finding insights in large and complex sets of unstructured and semi-structured content like images and transcripts.

The QnA Maker tool for turning FAQs into an interactive chatbot can now handle multi-turn dialogs, and the Language Understanding Service (LUIS) can extract multiple intents from a long sentence. So if a user types, say, “send a large pepperoni pizza over to my home after 9pm this evening, but make sure it is a deep dish with the new spicy pepperoni,” LUIS can more easily translate what they want into the right pizza order.

Microsoft also added a new category of services, Decision (alongside Vision, Speech, Language and Search). Decision includes the existing Content Moderator tool, for reviewing user-contributed text and images that might not be the kind of content you want to include (whether it’s offensive, age-inappropriate or about your competitors), and the recently released Anomaly Detector service.

Anomaly Detector monitors time-series data for values that aren’t what they should be. You can run it in the cloud if you’re monitoring a website or online business process for fraud or worrying trends, or locally in a Docker container. Running it locally is ideal if you want to check whether a really unusual figure signals an actual disaster like a fire or flood, or just that the sensor on an IoT device might be failing.
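The article doesn’t say which algorithms Anomaly Detector uses, so the sketch below swaps in a deliberately simple technique, a rolling z-score, purely to illustrate what flagging “values that aren’t what they should be” in a time series means. It is not the service’s API: the function name, the `window` size and the three-standard-deviation `threshold` are all assumptions chosen for illustration.

```python
from statistics import mean, stdev


def rolling_zscore_anomalies(values, window=12, threshold=3.0):
    """Return the indices of points that sit more than `threshold` standard
    deviations away from the mean of the preceding `window` points.

    Illustrative rolling z-score stand-in; NOT the Anomaly Detector API.
    """
    if window < 2:
        raise ValueError("window must be at least 2 to compute a standard deviation")
    anomalies = []
    for i in range(window, len(values)):
        history = values[i - window:i]  # trailing window, excludes the current point
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # A perfectly flat history: flag any change at all.
            if values[i] != mu:
                anomalies.append(i)
        elif abs(values[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


if __name__ == "__main__":
    # Hypothetical temperature readings from an IoT sensor, with one spike.
    readings = [20.1, 20.3, 19.9, 20.0, 20.2, 20.1, 19.8, 20.0,
                20.2, 20.1, 19.9, 20.0, 45.0, 20.1]
    print(rolling_zscore_anomalies(readings))  # → [12]
```

A real detector would also need to cope with seasonality and gradual trends, which a trailing mean and standard deviation ignore.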

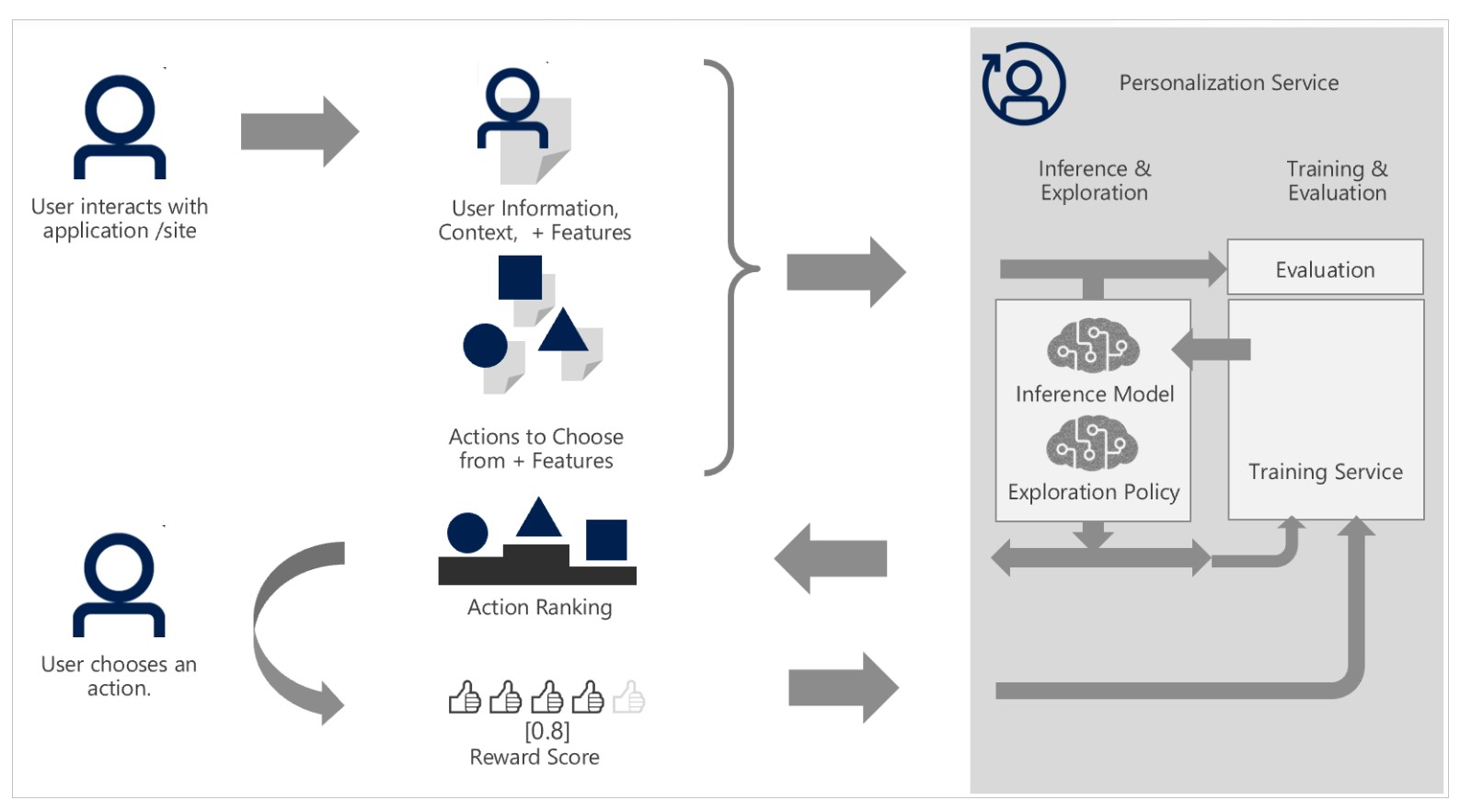

There’s also a brand new service in preview, Personalizer, for picking the best thing to show to users based on their behaviour in real time, whether that’s ads, shopping recommendations, news headlines, filters to apply to a photo, the next best action to complete a business process, or the best answer for a chatbot to give. You give the Rank API a list of the possible actions (the content your app could show) along with information about your users; the API can either rank those actions with its current model or explore new choices that might change the model. Rank returns a single action that your app shows to users. Based on whether they click the link, buy the product or choose a completely different photo filter, you send back a score with the Reward API, which is used to update the model.

How the Rank and Reward APIs work in the new Cognitive Services Personalizer
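To make the Rank and Reward loop concrete, here is a small local simulation that treats it as a contextual bandit and uses epsilon-greedy exploration, one of the simplest reinforcement learning strategies for a choose-then-score problem like this. It is a sketch under stated assumptions, not the Personalizer SDK: the class, the `rank` and `reward` method names (chosen only to mirror the two APIs described above), the event IDs, the simulated users and the 10% exploration rate are all hypothetical, and this is not the service’s own learning algorithm.

```python
import random
from collections import defaultdict


class EpsilonGreedyRanker:
    """Hypothetical local stand-in for a Rank/Reward loop (NOT the Personalizer SDK).

    Keeps an average reward per (context, action) pair and usually shows the
    best-scoring action, but explores a random one with probability epsilon.
    """

    def __init__(self, epsilon=0.1, seed=None):
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.stats = defaultdict(lambda: [0.0, 0])  # (context, action) -> [reward sum, count]
        self.pending = {}                            # event_id -> (context, action)
        self._next_event_id = 0

    def _estimate(self, context, action):
        total, count = self.stats[(context, action)]
        return total / count if count else 0.0

    def rank(self, context, actions):
        """Choose one action to show; returns (event_id, action)."""
        if self.rng.random() < self.epsilon:
            action = self.rng.choice(actions)  # explore
        else:
            action = max(actions, key=lambda a: self._estimate(context, a))  # exploit
        event_id = self._next_event_id
        self._next_event_id += 1
        self.pending[event_id] = (context, action)
        return event_id, action

    def reward(self, event_id, score):
        """Report how well the shown action worked (e.g. 1.0 = clicked, 0.0 = ignored)."""
        context, action = self.pending.pop(event_id)
        entry = self.stats[(context, action)]
        entry[0] += score
        entry[1] += 1


def train_demo(rounds=2000, seed=42):
    """Simulate users whose (hypothetical) preference depends on time of day."""
    preferences = {"morning": "news", "evening": "recipes"}
    actions = ["news", "recipes", "sport"]
    ranker = EpsilonGreedyRanker(epsilon=0.1, seed=seed)
    for i in range(rounds):
        context = "morning" if i % 2 == 0 else "evening"
        event_id, shown = ranker.rank(context, actions)
        ranker.reward(event_id, 1.0 if shown == preferences[context] else 0.0)
    return ranker


if __name__ == "__main__":
    ranker = train_demo()
    ranker.epsilon = 0.0  # switch off exploration to see what the model has learned
    for context in ("morning", "evening"):
        print(context, ranker.rank(context, ["news", "recipes", "sport"])[1])
```

Without the exploration step the ranker would keep showing whichever action it tried first in the evening and never discover that those users prefer recipes; the occasional random choice is what lets the model change, which is the role the article gives to Rank’s “explore new choices”.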

Personalizer uses reinforcement learning, a relatively new and advanced machine learning technique that technology companies like Google have been using internally. Microsoft may be the first to offer it as a commercial service for developers to take advantage of (the similar Custom Decision Service has been available as an experimental service through Cognitive Services Labs).

Another of the Cognitive Services Labs experiments has also graduated to a full service. Ink Recognizer converts handwriting into text and drawn shapes into smooth polygons. Developers can use it in an app to recognise handwriting, tidy up drawings so they’re easier to understand, make handwritten lists line up neatly on the page, make ink searchable without converting it to text, or make it easy to fill out forms with fields, even down to recognising only digits when someone is writing in a field that’s supposed to be for phone numbers. Because the digital ink is stored as JSON, ink recognition works in mobile and web apps as well as in Windows apps (this is the same ink recognition that’s been in PowerPoint since 2018), and it’s initially available for 63 languages and locales.

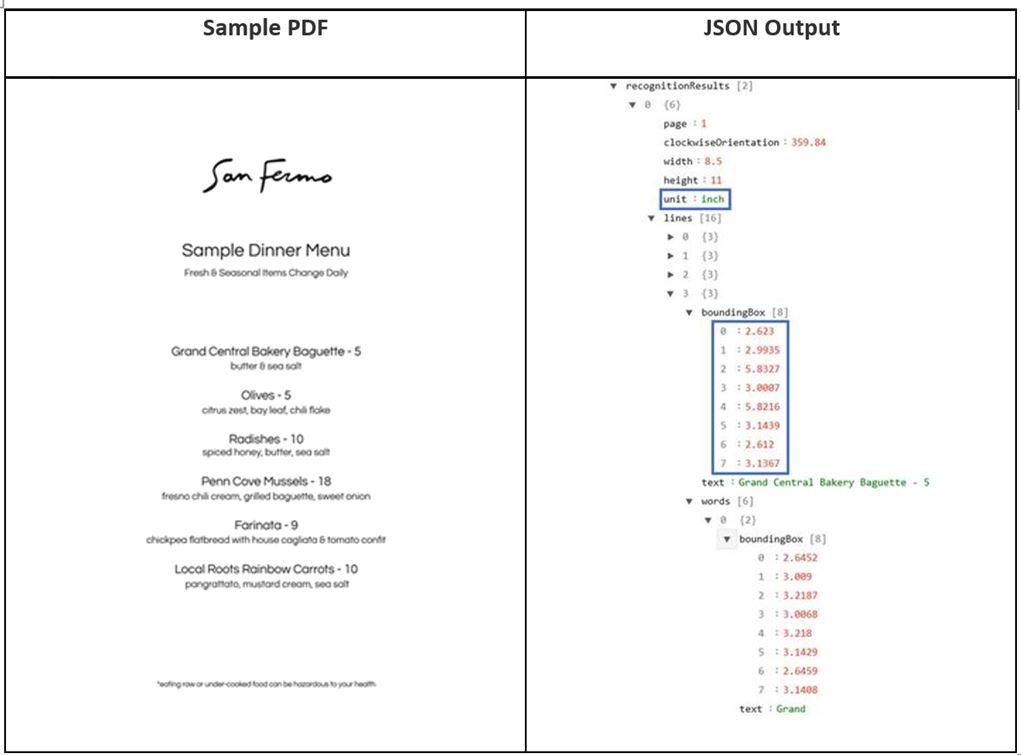

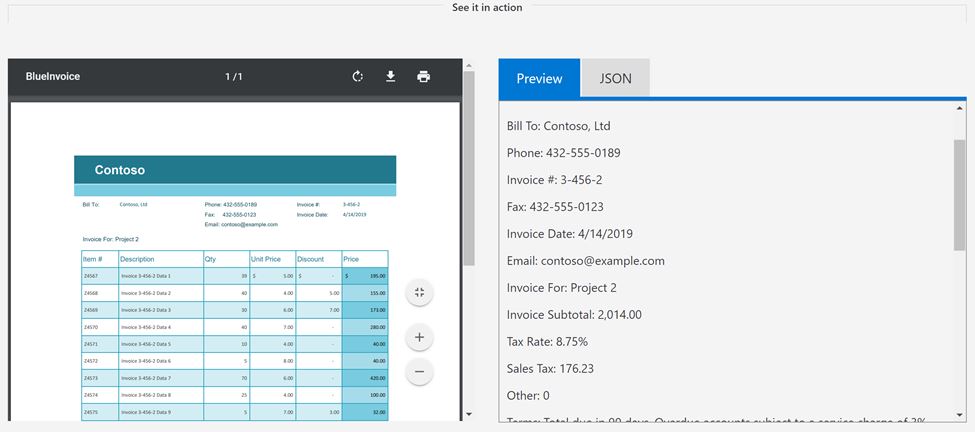

If you’re dealing with forms that have already been filled out on paper and then scanned (something many organisations have in their archives), the new Form Recognizer API can extract text from fields and tables in documents (stored as PDF, PNG or JPEG files) and store it as key-value pairs, turning it into structured data. Because most organisations have their own specific form layouts, you can customise the recognition model with as few as five sample documents. Because it’s a REST API, you can then pipe those recognised forms into existing search indexes and business workflows. (For less structured document layouts like a menu or the order of service for a wedding, try the Computer Vision Read API instead.)

The Computer Vision Read API can extract information from documents that aren’t laid out like a traditional form

And if you’re dealing with forms that have sensitive or regulated data that you might have issues taking to the cloud, you can run that trained model locally in a container. Initially it’s only available for English, and in the West US and West Europe Azure regions, but more languages and regions will be supported soon.

Recognising a table of information on a form

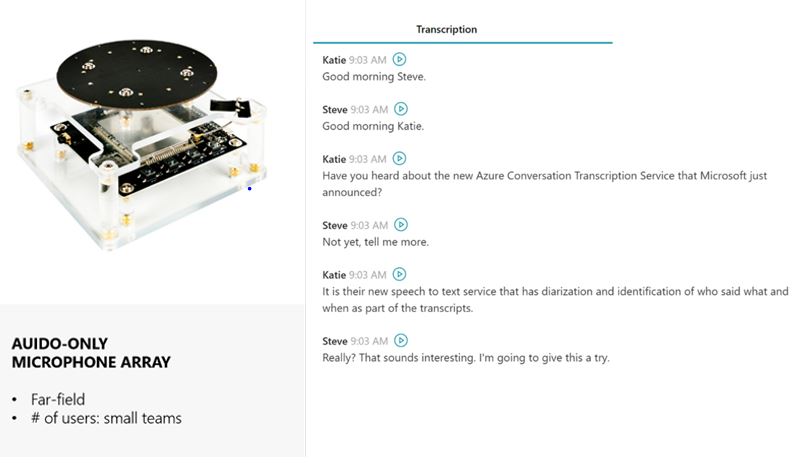
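Once Form Recognizer has produced key-value pairs, a typical next step before piping them into a search index or workflow is mapping the printed labels onto your own field names. The sketch below assumes a simplified, hypothetical input shape (a list of dicts with `key`, `value` and `confidence` entries); it is not the actual Form Recognizer response schema, and the `FormRecord` type, the field map and the 0.8 confidence cut-off are illustrative choices.

```python
from dataclasses import dataclass, field


@dataclass
class FormRecord:
    fields: dict = field(default_factory=dict)        # our field name -> extracted text
    needs_review: list = field(default_factory=list)  # fields a person should double-check


def to_record(pairs, field_map, min_confidence=0.8):
    """Map extracted key-value pairs onto our own field names.

    `pairs` uses a HYPOTHETICAL shape: [{"key": ..., "value": ..., "confidence": ...}].
    Labels are normalised ("Invoice No:" -> "invoice no") before lookup in `field_map`.
    """
    record = FormRecord()
    for pair in pairs:
        label = pair["key"].strip().rstrip(":").strip().lower()
        name = field_map.get(label)
        if name is None:
            continue  # a label our form layout doesn't care about
        record.fields[name] = pair["value"].strip()
        if pair.get("confidence", 1.0) < min_confidence:
            record.needs_review.append(name)
    return record


if __name__ == "__main__":
    extracted = [
        {"key": "Invoice No:", "value": " 10432 ", "confidence": 0.97},
        {"key": "Date:", "value": "03/05/2019", "confidence": 0.62},
        {"key": "Notes", "value": "n/a", "confidence": 0.90},
    ]
    field_map = {"invoice no": "invoice_number", "date": "invoice_date"}
    print(to_record(extracted, field_map))
```

Flagging low-confidence fields for review, rather than dropping them, keeps the whole form in the workflow while still catching likely misreads from scanned paper.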

Organisations using Teams and Microsoft Stream can already get transcripts of meetings and videos. That same transcription of meetings and conversations is now available as a real-time Conversation Transcription feature in Speech Services. Initially it needs a circular seven-microphone array like the one Microsoft used to demo meeting transcription at Build in 2018 (which is available as a development kit as part of the Microsoft Speech Services SDK), and it’s suited to small groups of people, especially as you need to train it with sample recordings for each person speaking and create user profiles for them. You can give the service multiple custom vocabularies so it can more accurately recognise words from your industry and your company, and teaching it about, say, health terms won’t compromise its accuracy at recognising transport terms if your business transports pharmaceuticals.

The Conversation Transcription Service in action and the seven-microphone array it needs to work

Microsoft is planning to expand conversation transcription to larger groups, and to take advantage of existing microphones in a video conferencing-equipped room. And a demo at Build 2019 showed it working with the microphones on the laptops and phones of meeting attendees and turning those into an array microphone, making it easier to work out who is speaking and putting the right name on their portion of the conversation. This is one of the hardest problems in speech recognition, so those developments may take a little time, but the state of the art here is moving very rapidly.


Mary Branscombe

Mary Branscombe is a freelance tech journalist. Mary has been a technology writer for nearly two decades, covering everything from early versions of Windows and Office to the first smartphones, the arrival of the web and most things in between.
