How can Power BI and Azure enterprise analytics help you better understand your business?

Blog|by Mary Branscombe|11 July 2019

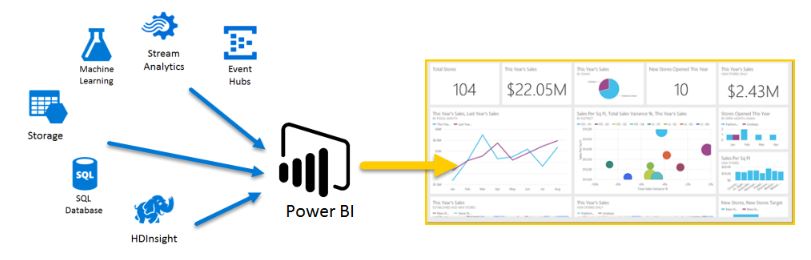

As more and more data moves into the cloud, and businesses have more and more data sources to get insight from, first business intelligence and now enterprise analytics have been moving into the cloud as well. Where you would once have used Excel, Power Query and SQL Server Analysis Services, now you’d pick Power BI and the Azure data services to create the analytics solution you need.

“The secret to making sense of the different Azure data options that you could use here is to think of it as a stack”, explains Arun Ulag, who runs Microsoft’s Business Intelligence product portfolio.

“We generally recommend that customers bring all their data into a data lake, like Azure Data Lake gen 2. Then you have a preparation layer where you create data marts and data warehouses, where you use Azure SQL Data Warehouse which allows you to create data marts that are focused on a specific business use case. And then the tier above that is where you create your semantic model layer: semantic models are where you define your business logic, your KPIs, your measures, your calculations and so on. That semantic model is something that was typically done in products like SQL Server Analysis Services, or Azure Analysis Services, which are also built by my team, and now are directly integrated into Power BI. And then the layer above is our visualisation layer, which is Power BI.”

Microsoft, for example, has a data model for its worldwide financial analytics which tracks products and revenue by customer segment and geography. The data lives in a KPI data lake with some 20 billion rows of data drawn from 60 different data sources, with about 2,200 KPIs. “What’s our revenue, what’s a year over year growth, what’s available to budget, how do we do currency translations…” About 15,000 Microsoft employees worldwide access the KPI lake, usually through Excel or Power BI.

The same people won’t be involved in all those layers, he points out. “The business analysts aren’t programmers, they’re folks with advanced Excel skills and they’re usually familiar with one or more BI tools as well. They create the reports, the dashboards and analytics that enable the business users to focus on what matters most to them. They would use Power BI, and the fact that shares a lot of the same foundations as Excel in terms of the way you get data, the formula language, that makes them very productive.”

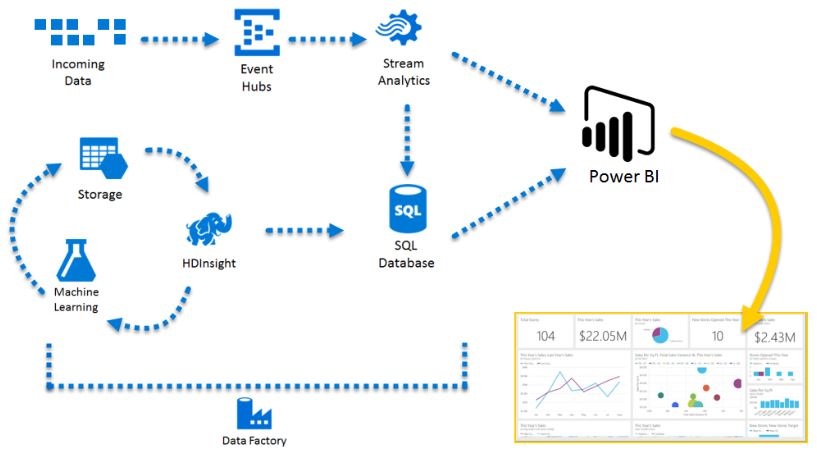

There are some self-service options in Power BI for them to access data from Azure, but the ‘heavy lifting’ will be done by data developers. “They would be using Azure Data Factory [for ETL] and connecting to different data sources and bring them into Azure Data Lake, they would be preparing the data and transforming it and loading it into Azure Data Warehouse. Then the data scientists that build the machine learning models, that do the predictive analytics, use tools like Azure Machine Learning, or R or Python.”

Medical supplier Coloplast switched from spreadsheets and PDFs for reporting by using Azure Data Factory to extract data that it stores and models in Azure SQL Database, processes in Azure Analysis Services and visualises in Power BI – but for advanced analytics and the machine learning it wants to move on to, the data is staged into Azure Data Lake and moves on to Azure SQL Data Warehouse (still connecting to Power BI for self-service data analytics). Newell Brands – the company behind Parker pens, Yankee Candle and Rubbermaid – has built a similar data platform, storing over 100 terabytes of data in Azure Data Lake (pulled in by Azure Data Factory from ERP, POS and CRM systems). Key data is extracted into Azure SQL Data Warehouse, used to create data models in Azure Analysis Services and visualised in Power BI and Excel.

If you’re looking for a SaaS approach instead, Adobe Analytics Cloud already works with Power BI and Adobe plans to integrate with Azure Data Lake and Data Factory in future services, to help customers ingest, clean and analyse data at scale.

Seamless services

These different services will be used by people in different roles, but the aim is to make this seamless for customers, with features like the Common Data Model. “They don’t have to do all the heavy lifting to wire all the services together, to do the system integration. When you bring data to Azure Data Lake we also bring the semantics around the data as well: the entities, the relationships, what the different fields mean – which one is an address field, which one’s a phone number, which one might be highly confidential information, such as salaries and social security numbers.”

IT can certify those semantic models and data sets so that business analysts know they’re approved; but analysts can also publish and promote their own data set as a semantic model and if that gets a lot of usage IT can pick it up and certify it. “We can enable business to forge ahead, but IT can engage and standardise and certify.” They can also configure Power BI to automatically copy data brought into Power BI into their Azure Data Lake, along with the metadata. “So if I choose to run Spark against the data, I can do that; if I want to build a machine learning model using Python, and I want to use the same data, I can do that. The business analysts won’t notice that anything has changed, but the data developers and IT now have full visibility and governance and control over the data in the data lake.”

Similarly, data scientists can build machine learning models in the Azure Machine Learning service and share them with business analysts by sending email or creating a distribution list. “Underneath the hood, we discover which machine learning models have been shared with the business analysts, and we build the user experience for that business analyst directly in Power BI.” Analysts can also use the Auto machine learning feature which was originally built for Azure Machine Learning “but Power BI shamelessly lifted it and made it available to business analysts,” he jokes.

Dutch fashion retailer Shoeby is using that for inventory management. “If they had to work with a data scientist to build a machine learning model for them, that would have taken months and been very expensive. Instead, they used Power BI with automated machine learning models to get a crystal ball to understand and predict which products are likely to be needed in which stores.”

Some customers have asked to be able to pass those Auto machine learning models on to data scientists: “If this is something that has become mission critical for the enterprise, they want to crack it open and tweak it and make some changes and validate it, and then be able to potentially share that back.” That’s not something Microsoft had anticipated but the option is now in preview.

For extremely large data sets, like the billions of rows of data from the SCADA systems that operate 6,000 miles of pipes and 1,000 pumping stations in Miami-Dade county (and going back 22 years), a new aggregation feature in Power BI makes it more efficient for pulling data from Azure SQL Data Warehouse as needed. The Power BI engine is actually the Analysis Services tabular engine, which uses the VertiPaq in-memory columnstore technology, so it’s very efficient at handling large amounts of data. That covers the majority of queries, Ulag says.

“When you’re looking at your data, and you’re slicing and dicing it, you’re typically looking at information in an aggregate way. You’re looking at what happened on this day, what happened in this geography. But if you wanted to go drilling into the fine detail of what happened to a particular sensor on a particular date at a particular time, Power BI transparently delegates the query to Azure SQL Data Warehouse and the data warehouse provides that fine granular detail. That’s a powerful pattern, because if you try to push all the end user queries to a data warehouse, typically most data warehouses are very good at serving fine levels of detail but they’re not meant to handle tens of thousands of sub queries in parallel. They’re not great at handling thousands of users who are trying to do interactive analysis and more large volumes of data.”

Put the two together and analysts can work with billions or trillions of rows of data through Power BI where data visualisation can help them find the right questions to ask, and because it’s connected to Azure data services they can handle the scale and make the same data available for other uses as your business develops more data science skills.

Grey Matter has a team of Power BI specialists and Azure Architects. Contact them on +44 (0)1364 654200 to discuss your use case.

Contact Grey Matter

If you have any questions or want some extra information, complete the form below and one of the team will be in touch ASAP. If you have a specific use case, please let us know and we'll help you find the right solution faster.

By submitting this form you are agreeing to our Privacy Policy and Website Terms of Use.

Mary Branscombe

Mary Branscombe is a freelance tech journalist. Mary has been a technology writer for nearly two decades, covering everything from early versions of Windows and Office to the first smartphones, the arrival of the web and most things in between.

Related News

Intel oneAPI 2024.1 A Milestone Release

What’s new in Intel oneAPI 2024.1 The 2024.1 release of Intel® Software Development Tools marks a major milestone for developers AND the entire software industry: the Intel® oneAPI DPC++/C++ Compiler has become the first compiler to fully support the SYCL...

ISV Partner Day Shortlisted for CRN Sales & Marketing Award

ISV Partner Day has been shortlisted for "Best Customer Event" at the CRN Sales & Marketing Awards

Microsoft 365 and Azure Security Tools: Microsoft Intune

In the second video in our series of short videos discussing Microsoft 365 and Azure security tools and concepts, our Microsoft experts cover off all you need to know about Microsoft Intune! Intune is a robust cloud-based solution to safeguard...

Women in Tech: A New Era | Roundtable

Fri 21 June 2024 5:00 pm - 11:30 pm BST

Get ready to shake it off (and network like nobody’s watching) because we’re hosting an exciting exclusive Women in Tech event with ESET that you won’t want to miss out on. Join us and share feedback, experiences and insights with...