Microsoft Cognitive Services: an introduction

Blog|by Jamie Maguire|18 June 2018

Artificial intelligence has been advancing at such a pace recently that it can be hard to keep up. It has been a disruptive force, powering innovative solutions and helping to drive digital transformation programmes around the world.

Microsoft and other players, such as IBM with Watson, are effectively democratising decades of complex machine learning and artificial intelligence research, making it available to the market, at scale, in the form of cloud-hosted APIs.

Developers, data scientists, and the tech community can now leverage artificial intelligence with relative ease and build innovative solutions that can help augment human capability.

In this blog post, the first in a four-part series, we introduce Microsoft Cognitive Services and how the platform lets you harness the power of artificial intelligence; we also touch on some of the more recent updates that were announced during Build 2018.

Vision

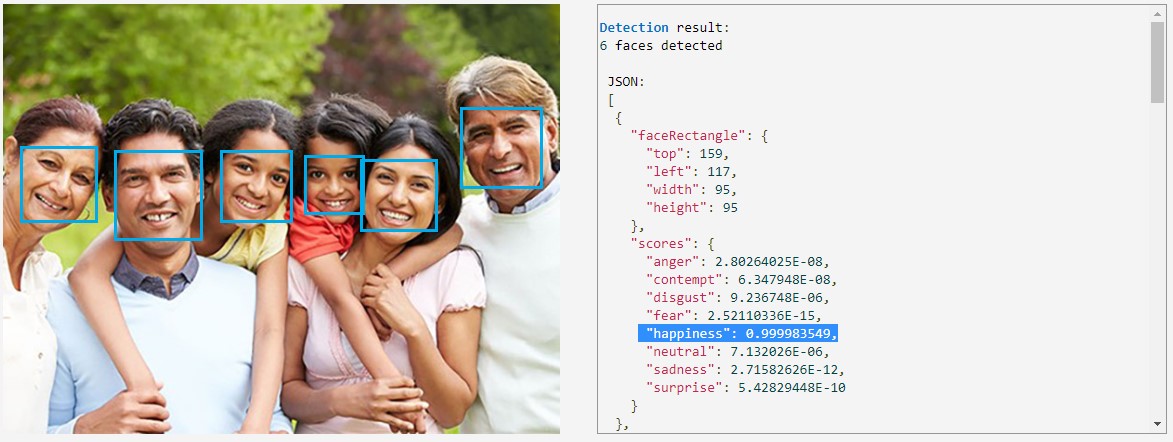

The Cognitive Services platform is split into 5 high-level areas: Vision, Speech, Knowledge, Search and Language. First up is Vision. The Vision APIs let you build applications that can identify and analyse video and image content. Some of the functionality includes, but is not limited to:

- Image classification

- Face detection

- Person identification

- Emotion detection in an image

- Identifying offensive content

- Handwriting recognition

For example, you can see the Emotion Recognition API in action here. It has identified 6 faces and determined, with 99% confidence, that happiness is being expressed.

Image: Microsoft
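To give a feel for what working with a result like this might involve, here is a minimal Python sketch. It assumes each detected face comes back with a dictionary of per-emotion confidence scores between 0 and 1. The sample payload is made up for illustration, the field names (`faceRectangle`, `scores`) are assumptions that should be checked against the live response, and the helper names are hypothetical. No network call is made.

```python
from typing import Dict, List, Tuple

# Illustrative payload only (not real service output): two faces, each with
# per-emotion confidence scores. Field names are assumptions.
SAMPLE_FACES: List[dict] = [
    {
        "faceRectangle": {"left": 10, "top": 20, "width": 50, "height": 50},
        "scores": {"happiness": 0.99, "neutral": 0.01, "sadness": 0.0},
    },
    {
        "faceRectangle": {"left": 90, "top": 25, "width": 48, "height": 48},
        "scores": {"happiness": 0.35, "neutral": 0.6, "sadness": 0.05},
    },
]


def dominant_emotion(scores: Dict[str, float]) -> Tuple[str, float]:
    """Return the (emotion, confidence) pair with the highest score."""
    return max(scores.items(), key=lambda item: item[1])


def summarise(faces: List[dict]) -> List[Tuple[str, float]]:
    """Return the dominant emotion for every face in the response."""
    return [dominant_emotion(face["scores"]) for face in faces]


if __name__ == "__main__":
    for emotion, confidence in summarise(SAMPLE_FACES):
        print(f"{emotion}: {confidence:.0%}")
```

Picking the highest score per face is only one way to read the result; an application might instead apply a minimum confidence before acting on it.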

Speech

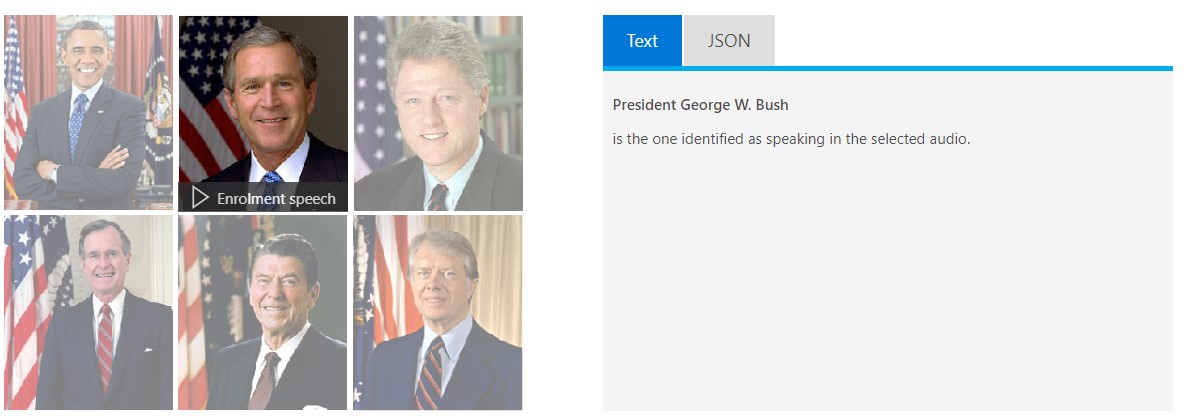

The Speech APIs allow you to integrate voice capabilities with your existing application or service. If you’re building a chatbot, you might even want to let it speak to users. Some of the key features include:

- Speech to text

- Speaker recognition

- Text to Speech

- Speech translation

It’s a little hard to relay this in a blog, but in the screenshot below you can see that the API has identified, with high confidence, that the audio file played was a recording of George W Bush.

Image: Microsoft

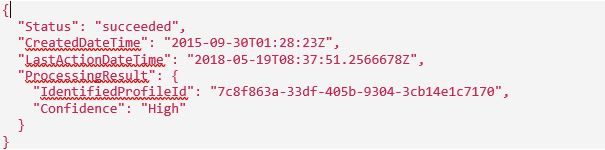

The APIs return JSON which contains additional metadata that you can also see here:

Source: Microsoft

Knowledge

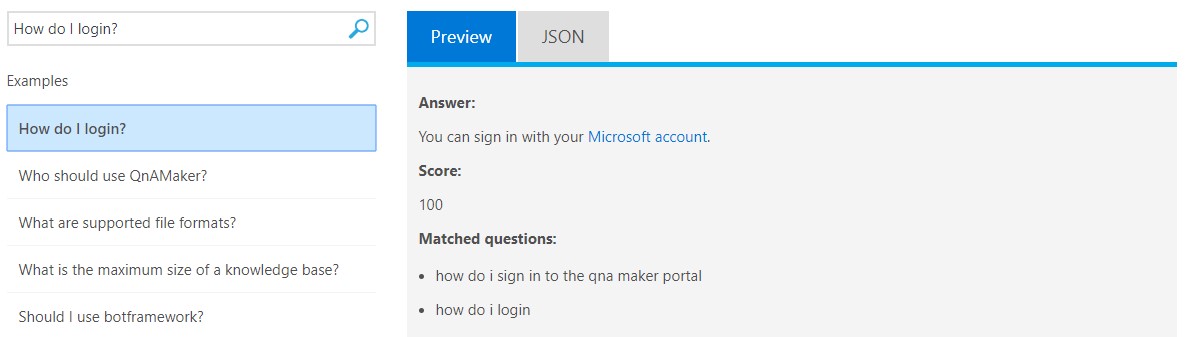

In an age where the volume of user generated data shows no signs of decreasing, technologies like artificial intelligence are well suited to processing and understanding such datasets. This is one area where the Knowledge Services API can help. At the time of writing there are two services:

- QnA Maker

- Custom Decision

The QnA Maker lets you extract questions and answers from a data source of your choice; it’s ideal if you have an existing knowledge base or content and want to autogenerate a Q and A resource. Here you can see that the question “How do I login” has been asked, and the relevant answer has been returned with an accompanying confidence score of 100.

Source: Microsoft

When you’ve ingested your content, your newly created QnA service can be tweaked through an easy-to-use web interface, which gives you total control over the pairs of questions and answers that you offer to your users. If you’re developing chatbots, the QnA Maker can also be integrated with the Bot Framework to give your chatbot “out of the box” QnA features.

Accenture used the QnA maker to rapidly build a corpus that powered an HR chatbot for over 100,000 users!
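If you want to call a QnA service from your own code rather than through the Bot Framework, the flow might look something like the Python sketch below. It is modelled on the `generateAnswer` call as I understand it (a POST with an `EndpointKey` authorisation header that returns a list of answers scored from 0 to 100), so treat the URL shape, header and field names as assumptions to check against your own service. The host, knowledge base ID, key and answer text are placeholders, the function names are hypothetical, and nothing is sent over the network.

```python
import json
from typing import Dict, Optional, Tuple


def build_generate_answer_request(
    host: str, kb_id: str, endpoint_key: str, question: str
) -> Tuple[str, Dict[str, str], str]:
    """Assemble the URL, headers and JSON body for a question (assumed shape)."""
    url = f"{host}/knowledgebases/{kb_id}/generateAnswer"
    headers = {
        "Authorization": f"EndpointKey {endpoint_key}",
        "Content-Type": "application/json",
    }
    body = json.dumps({"question": question})
    return url, headers, body


def best_answer(response: dict, min_score: float = 50.0) -> Optional[str]:
    """Return the highest-scoring answer, or None if it falls below min_score."""
    answers = response.get("answers", [])
    if not answers:
        return None
    top = max(answers, key=lambda answer: answer.get("score", 0.0))
    if top.get("score", 0.0) < min_score:
        return None
    return top["answer"]


# Illustrative response only; the answer text is invented for this example.
SAMPLE_RESPONSE = {
    "answers": [
        {
            "questions": ["How do I login"],
            "answer": "Select Sign in and enter your account details.",
            "score": 100.0,
        }
    ]
}

if __name__ == "__main__":
    print(best_answer(SAMPLE_RESPONSE))
```

The `min_score` threshold of 50 is an arbitrary choice for the sketch; in a chatbot you would tune it so that low-confidence matches fall back to a default reply.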

Search

Powered by Bing, the Search services offer a wide range of endpoints that help you find exactly what you’re looking for, at scale. Whether you’re searching web pages, images, videos or news results, the Search APIs that ship with Cognitive Services provide many capabilities, including but not limited to:

- Searching for websites

- Custom Search

- Video topic and trend identification search

- Knowledge acquisition from images

- Identification of similar images

- Named entity recognition

- News search, trending news identification search

- …and much more

Being able to infer additional metadata from user images, or to identify news trends, can give you actionable insights that help you make more informed decisions. For example, if a news story is trending, digital marketers may want to increase their ad spend. Or maybe you’re processing vast quantities of structured and unstructured data and want to identify entities within streams of text. The Search services can help you achieve all this.
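As a rough illustration of how a web search call could be put together in Python, the sketch below builds the request and pulls titles and links out of a response. It assumes the Bing Web Search v7 endpoint and the `Ocp-Apim-Subscription-Key` header, and a response that lists results under `webPages.value`; check these against your own subscription. The sample response is invented, the function names are hypothetical, and no request is actually sent.

```python
from typing import Dict, List, Tuple
from urllib.parse import urlencode

# Assumed endpoint for Bing Web Search v7; your subscription may use another host.
SEARCH_ENDPOINT = "https://api.cognitive.microsoft.com/bing/v7.0/search"


def build_search_url(query: str, count: int = 10) -> str:
    """Return the search URL with the query and result count encoded."""
    return f"{SEARCH_ENDPOINT}?{urlencode({'q': query, 'count': count})}"


def build_headers(subscription_key: str) -> Dict[str, str]:
    """Return the header that carries the subscription key."""
    return {"Ocp-Apim-Subscription-Key": subscription_key}


def extract_web_results(response: dict) -> List[Tuple[str, str]]:
    """Return (title, url) pairs from the web page results, if any."""
    pages = response.get("webPages", {}).get("value", [])
    return [(page["name"], page["url"]) for page in pages]


# Illustrative response only (not real search output).
SAMPLE_RESPONSE = {
    "webPages": {
        "value": [
            {"name": "Example result", "url": "https://example.invalid/a"},
            {"name": "Another result", "url": "https://example.invalid/b"},
        ]
    }
}

if __name__ == "__main__":
    print(build_search_url("cognitive services", count=5))
    for title, link in extract_web_results(SAMPLE_RESPONSE):
        print(title, link)
```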

Language

The amount of user generated content is staggering, especially on social media. Quite often this contains human language and valuable insights can be inferred from such content. Being able to understand human language is a well-researched area of computer science and the Language services that ship with Cognitive Services help you build solutions that understand the underlying meaning or intent behind what’s being communicated.

It’s split up into 5 main capabilities:

- Text Analytics

- Bing Spell Check

- Language Understanding

- Translator Text

- Content Moderation

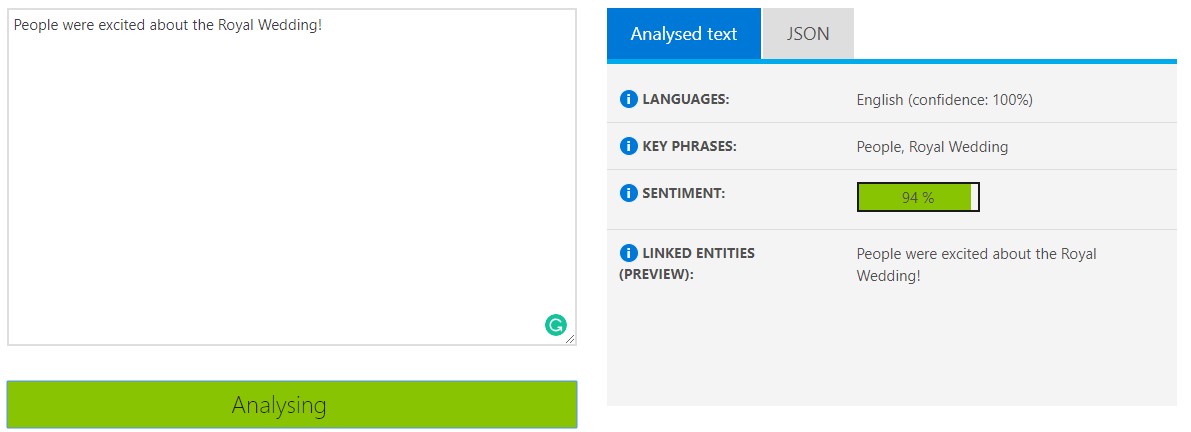

Each capability has its own sub-capabilities. For example, the Text Analytics capability lets you identify Key Phrases, Entities and the underlying Sentiment within a given stream of text. I wish this had been available during my Masters a few years back, when I built an API that, amongst other things, performed sentiment analysis of Twitter data. It would have saved me a lot of work!

Source: Microsoft
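To make the sentiment idea a little more concrete, here is a small Python sketch. It assumes the request takes a list of documents (each with an `id`, `language` and `text`) and that the response returns a score per document between 0 (negative) and 1 (positive), which is how I understand the v2 sentiment endpoint to behave. The thresholds, sample response and function names are hypothetical choices for illustration, and no call is made to the service.

```python
import json
from typing import Dict, List


def build_sentiment_body(texts: List[str], language: str = "en") -> str:
    """Wrap raw strings in the assumed 'documents' request structure."""
    documents = [
        {"id": str(index), "language": language, "text": text}
        for index, text in enumerate(texts, start=1)
    ]
    return json.dumps({"documents": documents})


def label_sentiment(score: float, low: float = 0.4, high: float = 0.6) -> str:
    """Map a 0..1 score to a coarse label (thresholds are arbitrary)."""
    if score >= high:
        return "positive"
    if score <= low:
        return "negative"
    return "neutral"


def label_response(response: dict) -> Dict[str, str]:
    """Return a {document id: label} mapping for every scored document."""
    return {
        doc["id"]: label_sentiment(doc["score"])
        for doc in response.get("documents", [])
    }


# Illustrative response only (not real service output).
SAMPLE_RESPONSE = {
    "documents": [{"id": "1", "score": 0.92}, {"id": "2", "score": 0.08}],
    "errors": [],
}

if __name__ == "__main__":
    print(build_sentiment_body(["Great service!", "Terrible delay."]))
    print(label_response(SAMPLE_RESPONSE))
```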

Recent updates to the Cognitive Services Ecosystem in 2018

At Build, Microsoft introduced additional services to some of the existing capabilities within the Cognitive Services ecosystem. Some of the key updates include:

Computer Vision

- Improved OCR model

- Build and create your own customised image classifiers

- Location recognition of objects in images

- Machine assisted content moderation and a new human review tool

- Video indexer – you can now extract insights from videos

Language and Text Analytics

- Identification of Entities and linking from raw text. This lets you recognise well known items (or entities) within a string of text such as a place or location, person or organisation and will link to more relevant information on the internet

Cognitive Services Labs

For those early adopters out there, Cognitive Services Labs have just been released. This gives developers and the tech community an insight into the cutting-edge technologies that are being developed on the Cognitive Services platform. If you don’t need market-ready technology, it’s a good place to get a feel for what’s on the horizon.

Image: Microsoft

At the time of writing, there are 12 projects that range from Vision and gesture-based systems (Project Gesture) to Search (Project Event Tracking).

Summary

In this blog post we’ve looked at the Cognitive Services APIs at a high level and explored some of their capabilities and use cases. We’ve also looked at some of the recent updates to these APIs that were announced at Build 2018 and how you can experiment with up and coming technology through the Labs portal.

In the next blog post, we’ll look at the Language capabilities and Text Analytics API, some of its features and how you can use them.

Are you working with any of the Cognitive Services APIs? How are you using them?

Related article: read our Demystifying Azure PaaS post and see how platform-based services leverage the latest technologies in the cloud.

This article is the first in a four-part series.


Jamie Maguire

http://www.jamiemaguire.net

Software Architect, Consultant, Developer, and Microsoft AI MVP. 15+ years’ experience architecting and building solutions using the .NET stack. Into tech, web, code, AI, machine learning, business and start-ups.
