Microsoft Cognitive Services Face API

Blog | by Jamie Maguire | 9 August 2018

Introduction

In the last couple of Cognitive Services posts, we focussed on text analytics and how we can process natural language using LUIS. In this blog post, we focus on the Microsoft Cognitive Services Face API. Specifically, we look at:

• what you can do with the Face API

• some worked examples

• some of the applications for the API

• how easily you can integrate the API with your existing products and services

What’s possible with the Face API?

Initially released in 2016, the Face API is part of the Microsoft Cognitive Services set of AI cloud services and gives you access to some of the most advanced face recognition algorithms currently on the market. Its main features centre on face detection with attributes, face recognition and emotional recognition, which allow you to:

• Detect human faces

• Find similar faces

• Group faces based on similarity

• Identify previously tagged people using their faces

• Identify the underlying emotion for each face in an image (anger, contempt, fear, happiness etc.)

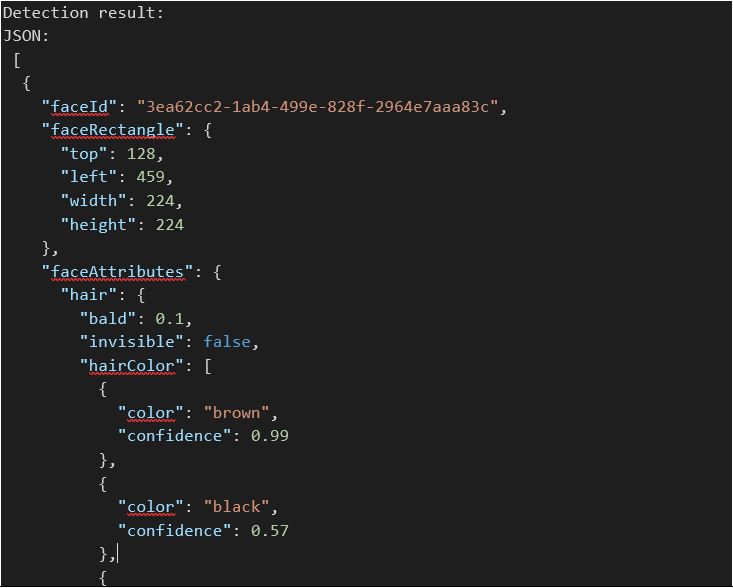

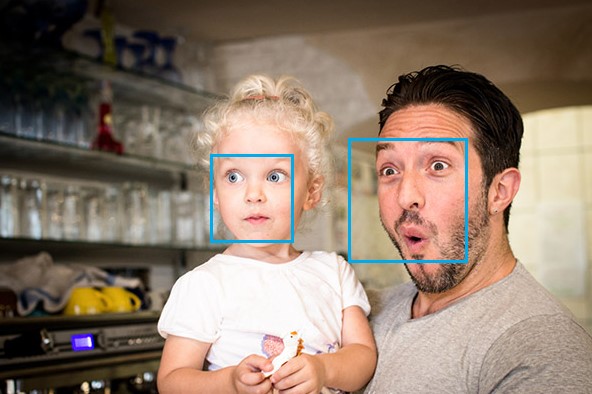

Face Detection

This feature of the API allows you to detect “face rectangles”, each accompanied by a rich set of attributes that includes, but is not limited to, Age, Emotion, Gender, Pose, Smile and Facial Hair. In this image, we can see the API has identified the face and highlighted it:

Image: Microsoft

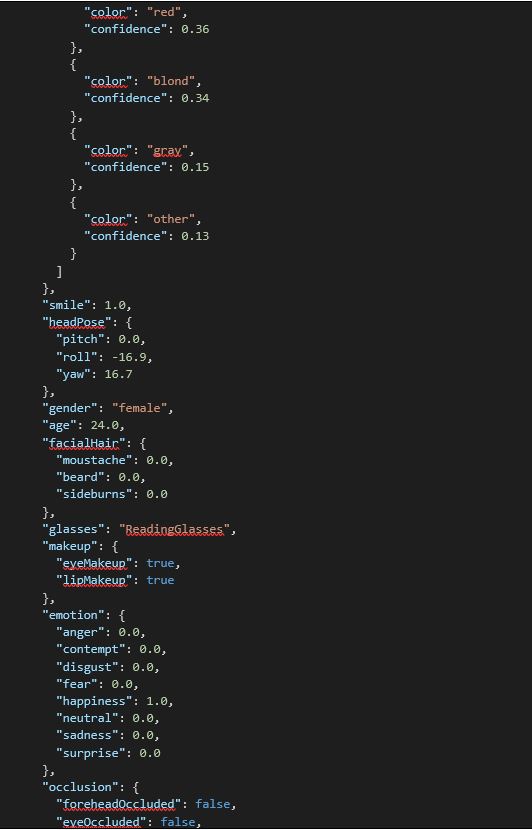

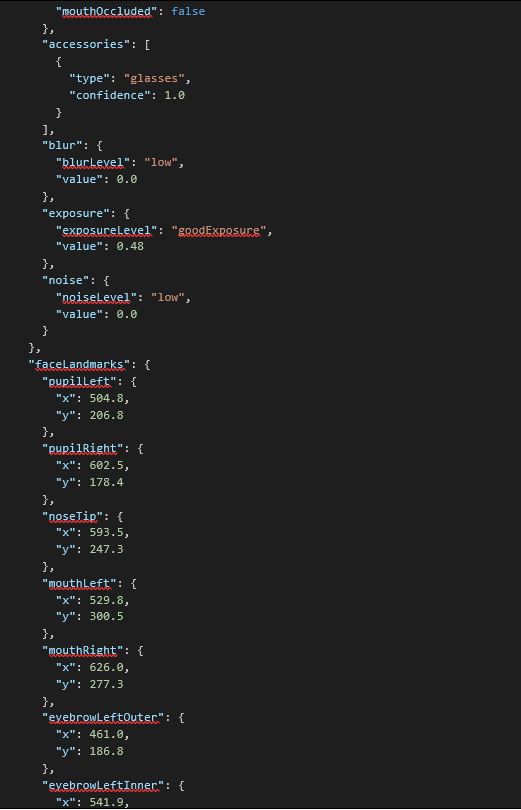

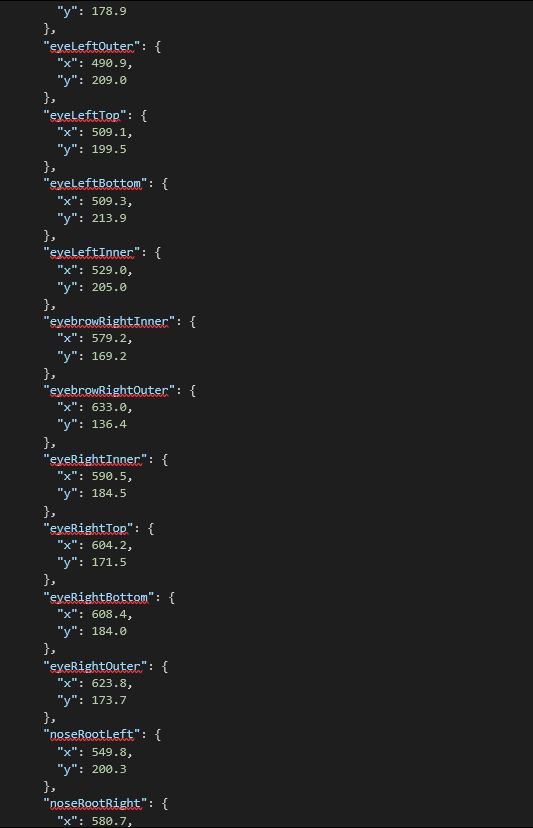
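To highlight a face the way Microsoft’s example does, you only need the `faceRectangle` that comes back with each detected face: its `top` and `left` position plus its `width` and `height`, in pixels. The Python below is a minimal sketch assuming that response shape; the sample values are made up for illustration rather than taken from Microsoft’s example image, and `Box` and `face_boxes` are hypothetical helper names.

```python
import json
from typing import NamedTuple


class Box(NamedTuple):
    """Pixel coordinates of a face's bounding box."""
    x1: int  # left edge
    y1: int  # top edge
    x2: int  # right edge
    y2: int  # bottom edge


def face_boxes(detect_response: str) -> list[Box]:
    """Convert each faceRectangle in a detect response (a JSON array of faces) into corners."""
    boxes = []
    for face in json.loads(detect_response):
        r = face["faceRectangle"]
        boxes.append(Box(r["left"], r["top"], r["left"] + r["width"], r["top"] + r["height"]))
    return boxes


# Hypothetical response with one face; the numbers are illustrative only.
sample = '[{"faceId": "hypothetical-id", "faceRectangle": {"top": 131, "left": 177, "width": 162, "height": 162}}]'
print(face_boxes(sample))  # [Box(x1=177, y1=131, x2=339, y2=293)]
```

The corner form is what most drawing libraries expect when you overlay a rectangle on the original image.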

The following attributes are returned by the Face API for this image:

I won’t go into every single attribute in the above JSON, but some of the key attributes the API has correctly identified include:

- Gender

- Hair colour

- Makeup present

- Glasses present
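If you’re consuming the response in code, picking out these attributes is a short job. The sketch below assumes the v1.0 detect response layout (`faceAttributes` containing `gender`, `glasses`, `makeup` and `hair.hairColor`), which is only populated if you ask for `gender,glasses,makeup,hair` in `returnFaceAttributes`. The sample face and the `key_attributes` helper are hypothetical.

```python
import json


def key_attributes(face: dict) -> dict:
    """Pull gender, hair colour, makeup and glasses out of one face in a detect response."""
    attrs = face["faceAttributes"]
    # hairColor is a list of candidate colours with confidences; it can be empty
    # (e.g. when the hair is not visible), so fall back to None in that case.
    hair_colours = attrs["hair"]["hairColor"]
    top_colour = max(hair_colours, key=lambda c: c["confidence"])["color"] if hair_colours else None
    makeup = attrs["makeup"]
    return {
        "gender": attrs["gender"],
        "hair_colour": top_colour,
        "makeup_present": makeup["eyeMakeup"] or makeup["lipMakeup"],
        "glasses_present": attrs["glasses"] != "NoGlasses",
    }


# Hypothetical face object, trimmed to the fields used; values are illustrative only.
sample = json.loads("""
{"faceAttributes": {
  "gender": "female",
  "glasses": "ReadingGlasses",
  "makeup": {"eyeMakeup": true, "lipMakeup": false},
  "hair": {"bald": 0.02, "invisible": false,
           "hairColor": [{"color": "brown", "confidence": 0.98},
                         {"color": "black", "confidence": 0.57}]}}}
""")
print(key_attributes(sample))
# {'gender': 'female', 'hair_colour': 'brown', 'makeup_present': True, 'glasses_present': True}
```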

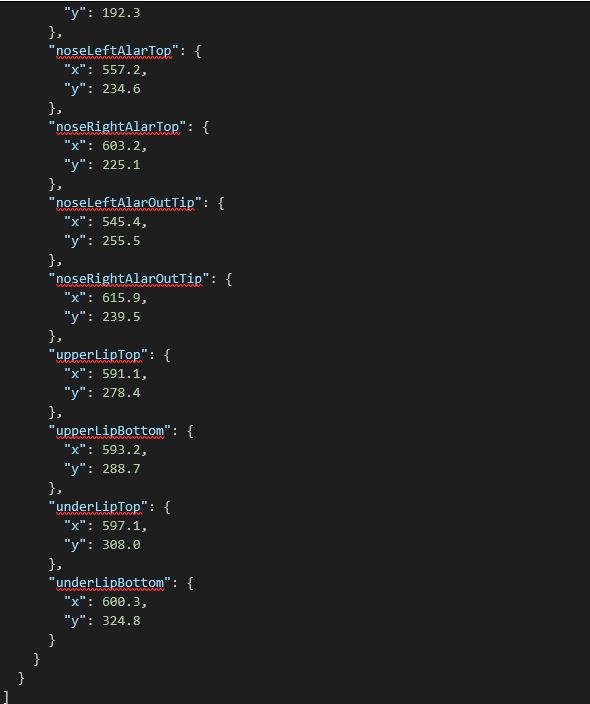

You might be wondering what a lot of the other attributes mean and where they’ve been identified in the image. For the image in this example, you can see where each of these is positioned:

Image: Microsoft

You can find a glossary of each attribute here. Interestingly, the API has also attempted to identify the underlying emotion the lady in the image is expressing, which brings us on to the Emotional Recognition feature of the Face API.

Emotional Recognition

Originally, Emotion Detection was shipped as its own independent API; Microsoft has since folded it into the Face API (which makes sense in my opinion!). With emotional responses being almost universal across cultures, being able to identify the underlying emotion from facial expressions is valuable in its own right.

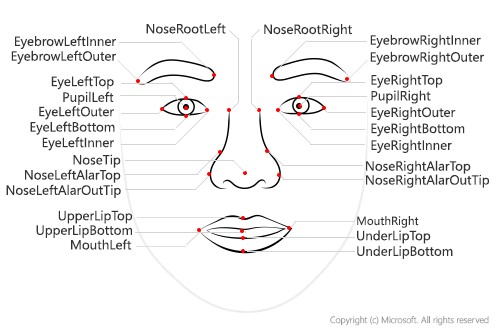

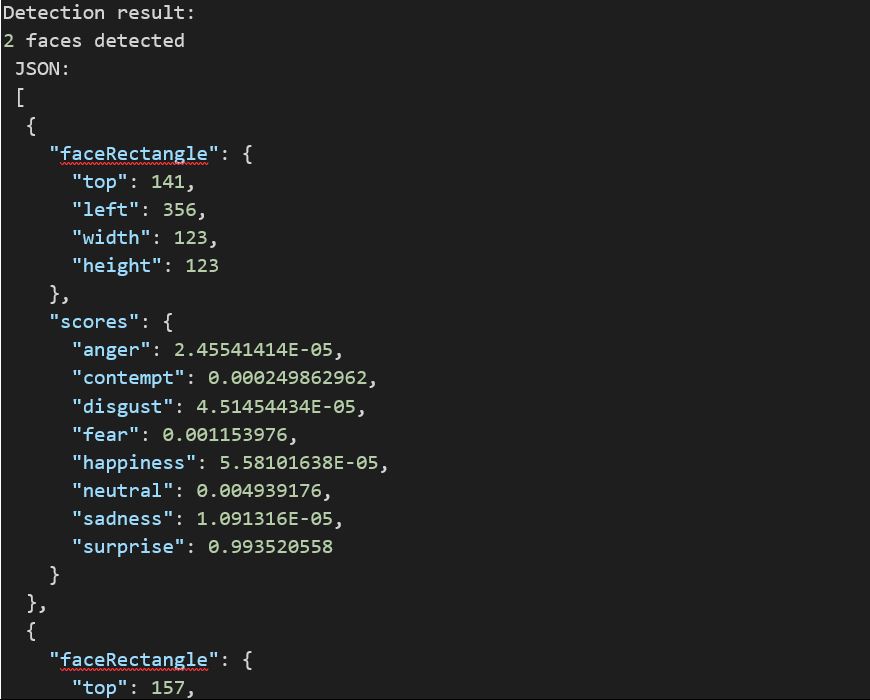

When you pass an image to the Emotional Recognition endpoint, it identifies any faces in the image and returns a JSON response containing the emotion it has detected for each face, together with a set of confidence scores (where 0 means low confidence and 1 means high confidence). You can see an example of this in action below:

Image: Microsoft

The associated JSON response when this image is supplied to the endpoint:

The key attributes in this example to look for are:

- 2 faces detected

- surprise: 0.993520558

- surprise: 0.792916059

I think you’ll agree, there are two surprised-looking faces in this image!
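Reading the dominant emotion out of a response amounts to taking the highest-scoring entry for each face. A minimal sketch, assuming the scores arrive as a name-to-confidence mapping under `faceAttributes.emotion`, as in a Face API detect response when `emotion` is requested; the two-face sample uses illustrative numbers, not the response for Microsoft’s example image, and the helper names are hypothetical:

```python
def dominant_emotion(scores: dict[str, float]) -> tuple[str, float]:
    """Return the emotion with the highest confidence score (0 = low, 1 = high)."""
    emotion = max(scores, key=scores.get)
    return emotion, scores[emotion]


def summarise(faces: list[dict]) -> list[tuple[str, float]]:
    """Dominant emotion for every face in a detect response."""
    return [dominant_emotion(face["faceAttributes"]["emotion"]) for face in faces]


# Hypothetical two-face response, trimmed to the emotion scores; numbers are illustrative.
faces = [
    {"faceAttributes": {"emotion": {"anger": 0.0, "contempt": 0.0, "disgust": 0.0, "fear": 0.01,
                                    "happiness": 0.02, "neutral": 0.01, "sadness": 0.0, "surprise": 0.96}}},
    {"faceAttributes": {"emotion": {"anger": 0.0, "contempt": 0.0, "disgust": 0.0, "fear": 0.05,
                                    "happiness": 0.10, "neutral": 0.07, "sadness": 0.0, "surprise": 0.78}}},
]
print(f"{len(faces)} faces detected")  # 2 faces detected
for emotion, score in summarise(faces):
    print(emotion, score)  # surprise 0.96, then surprise 0.78
```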

Note: The original Emotion Detection API is being deprecated in 2019. You can find out more about that here.

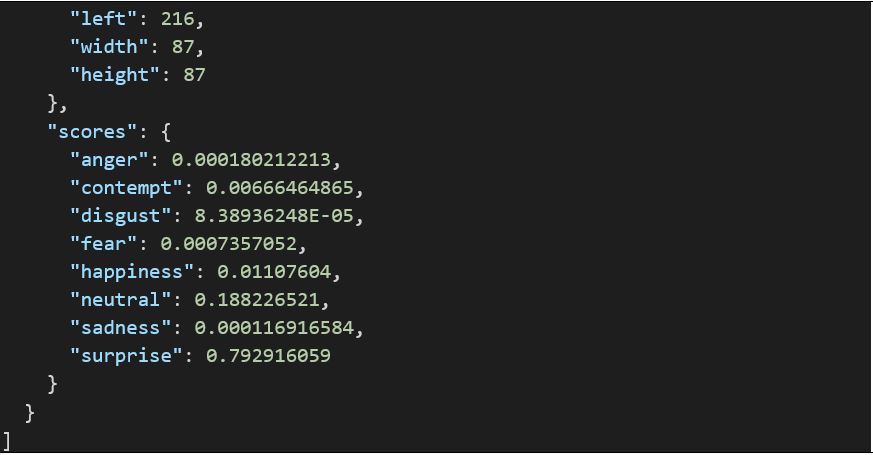

Face Verification

In a nutshell, this endpoint in the Face API determines the likelihood that two faces belong to the same person. Like the others, it returns a confidence score between 0 and 1. In this image, for example, you can see we’re dealing with the same person, and the API has arrived at a score of 0.7349 (or 73%):

Image: Microsoft

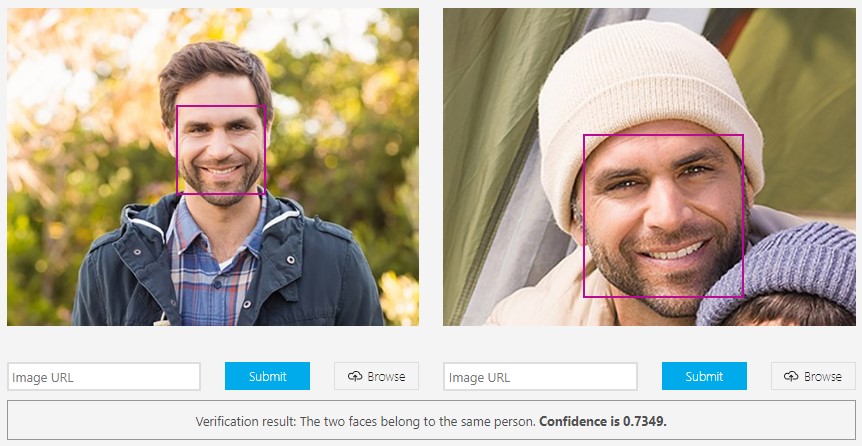

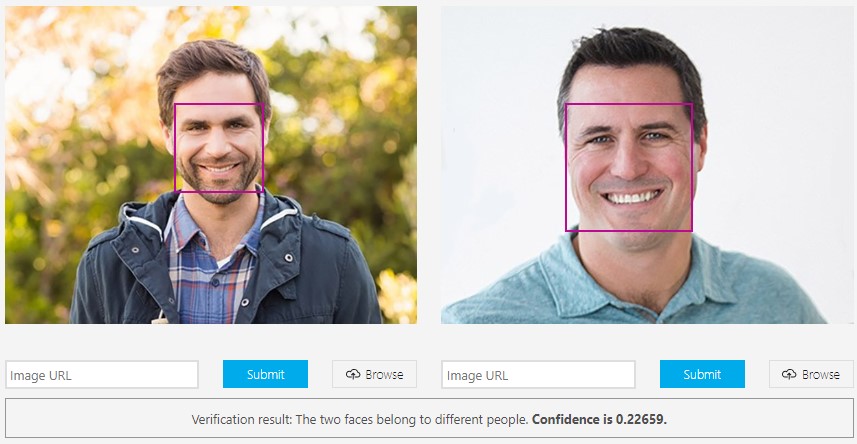

By contrast, if we supply an image of someone clearly unrelated, the score drops dramatically to 0.22659 (or 23%):

Image: Microsoft
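Under the hood, a verify call compares two `faceId` values returned by earlier detect calls and responds with a confidence plus an `isIdentical` flag. The sketch below assumes that request/response shape and a decision threshold of 0.5, which I understand to be the service’s default; check the current documentation before relying on it. `verify_body` and `same_person` are hypothetical helper names.

```python
import json

# Assumption: mirrors the service default, where isIdentical is true once confidence >= 0.5.
# Raise it if a false match is costly in your application.
DEFAULT_THRESHOLD = 0.5


def verify_body(face_id_1: str, face_id_2: str) -> str:
    """JSON body for a verify request comparing two previously detected faces."""
    return json.dumps({"faceId1": face_id_1, "faceId2": face_id_2})


def same_person(confidence: float, threshold: float = DEFAULT_THRESHOLD) -> bool:
    """Turn a verify confidence score (0 to 1) into a yes/no decision."""
    return confidence >= threshold


for confidence in (0.7349, 0.22659):  # the two scores from the examples above
    print(f"{confidence:.0%} -> same person: {same_person(confidence)}")
# 73% -> same person: True
# 23% -> same person: False
```

Keeping the threshold as a parameter makes the trade-off explicit: a stricter value rejects more impostors but also more genuine matches.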

These are the main endpoints that ship with the Face API; you can find a comprehensive list, including more nuanced endpoints such as “grouping”, here.

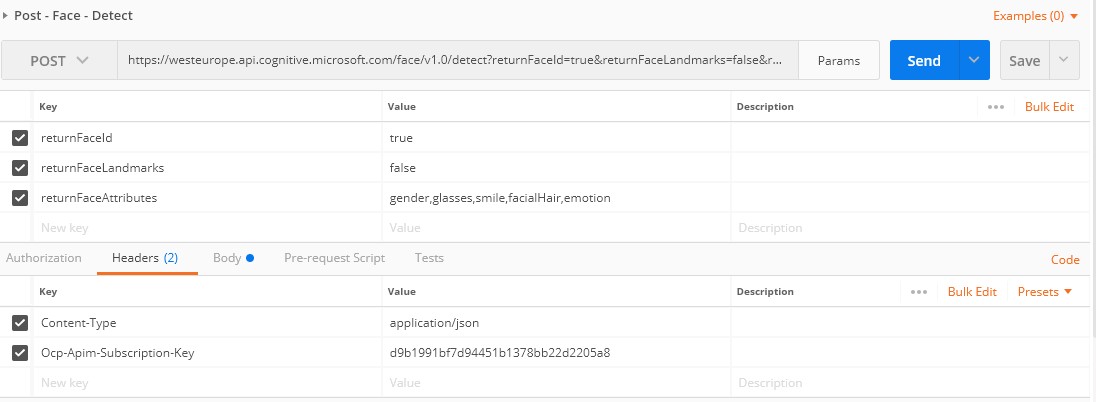

Easy Integration

As with all APIs in the Cognitive Services ecosystem, you can consume the Face API endpoints from a programming language of your choice. When exploring new APIs in the Cognitive Services stack, I tend to use Postman to get a quick understanding of the requests you need to make – and the parameters you need to supply.

With Postman, it’s easy to construct requests. In the image below, I’ve created a request to consume the Face Detection endpoint. Here you can see I’m supplying the relevant parameters and headers, as well as some JSON in the request body that contains a link to my Twitter profile image:

You’ll notice I’ve supplied the returnFaceAttributes parameter and included:

- Gender

- Glasses

- Smile

- Facial hair

- Emotion
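Once you’ve worked out a request in Postman, the same pieces carry over to code. Here is a minimal Python sketch that assembles the detect request described above without sending it. The region (`westeurope`), the key and the image URL are placeholders, and `build_detect_request` is a hypothetical helper name; the endpoint path, the `Ocp-Apim-Subscription-Key` header and the `returnFaceAttributes` values follow the v1.0 REST API.

```python
import json
from urllib.parse import urlencode


def build_detect_request(region: str, subscription_key: str, image_url: str,
                         attributes: list[str]) -> tuple[str, dict[str, str], str]:
    """Return (url, headers, body) for a v1.0 detect call on an image URL. Nothing is sent."""
    query = urlencode(
        {"returnFaceId": "true", "returnFaceAttributes": ",".join(attributes)},
        safe=",",  # keep the comma-separated attribute list readable
    )
    url = f"https://{region}.api.cognitive.microsoft.com/face/v1.0/detect?{query}"
    headers = {
        "Ocp-Apim-Subscription-Key": subscription_key,  # placeholder: your own resource key
        "Content-Type": "application/json",
    }
    body = json.dumps({"url": image_url})
    return url, headers, body


# Placeholder region, key and image URL; the attributes match the Postman example.
url, headers, body = build_detect_request(
    "westeurope", "YOUR-KEY", "https://example.com/profile.jpg",
    ["gender", "glasses", "smile", "facialHair", "emotion"],
)
print(url)
# https://westeurope.api.cognitive.microsoft.com/face/v1.0/detect?returnFaceId=true&returnFaceAttributes=gender,glasses,smile,facialHair,emotion
```

The returned pieces can be handed to any HTTP client, for example `urllib.request.Request(url, data=body.encode(), headers=headers, method="POST")`.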

When we execute the request, the Face API returns the following JSON. You can see it has identified the gender as male (thankfully!), the presence of facial hair, the type of glasses and a neutral emotion. I’d say it’s pretty accurate!

This is just one example; you can find more information about the other REST endpoints here on the Cognitive Services documentation site.

Visual Studio Connected Services

If you’re using Visual Studio, you also have the option of tapping into the Cognitive Services APIs using Connected Services. This Visual Studio add-on makes it easy to call the APIs from a CLR language in your Visual Studio solution in just a few lines of code! You’ll need an Azure subscription to use it, but you can get a free account to try it out. You can look at an end-to-end code example here if it’s something you’re interested in.

Further thoughts and applications for this API

The Face API features too many endpoints to discuss in one blog post, but I can see lots of applications for it, from security to marketing, or possibly even virtual counselling. Microsoft’s site has sample applications you can play with and extend, which should give you some ideas.

The API ships with functionality that allows you to effectively create a datastore of existing faces and check whether incoming faces belong to it. A feature like this could be applied to use cases where security is paramount; in fact, that’s exactly what Uber, the on-demand ride-hailing firm, has done.
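To make the “datastore” idea a little more concrete, the v1.0 REST API models it as a person group: you create the group, add people and their reference photos, train it, then call identify with the `faceId` of an incoming face. The sketch below only lists those calls in order rather than sending them. It is an illustration under those assumptions, not a description of how Uber built its solution, and the group name, person name, URLs and ID placeholders are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Call:
    """One REST call in the enrol-then-identify flow (not sent anywhere)."""
    method: str
    path: str  # relative to https://<region>.api.cognitive.microsoft.com/face/v1.0
    body: dict = field(default_factory=dict)


def enrol_and_identify_plan(group_id: str, name: str, enrol_urls: list[str],
                            person_id: str, probe_face_id: str) -> list[Call]:
    """Order of calls to build a person group and check a new face against it.

    person_id would come from the create-person response and probe_face_id from a
    detect call on the incoming image; both are placeholders here.
    """
    plan = [
        Call("PUT", f"/persongroups/{group_id}", {"name": group_id}),
        Call("POST", f"/persongroups/{group_id}/persons", {"name": name}),
    ]
    # Register one or more reference photos for the person.
    plan += [Call("POST", f"/persongroups/{group_id}/persons/{person_id}/persistedFaces", {"url": u})
             for u in enrol_urls]
    # Training runs asynchronously; it must complete before identify can use the group.
    plan.append(Call("POST", f"/persongroups/{group_id}/train"))
    plan.append(Call("POST", "/identify", {"personGroupId": group_id, "faceIds": [probe_face_id]}))
    return plan


for call in enrol_and_identify_plan("drivers", "Jane Doe", ["https://example.com/jane.jpg"],
                                    person_id="<personId>", probe_face_id="<faceId>"):
    print(call.method, call.path)
```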

Uber and the Face API

By leveraging the Face API, Uber were able to mitigate the risk of fraud and ensure drivers and riders could travel with peace of mind. Passengers understandably don’t want to get into a taxi whose driver doesn’t match the profile in the Uber app, and with one of Uber’s key philosophies being “no strangers”, this was a key business challenge to solve.

A solution was built in less than 1 month using the Face API to help ensure that drivers using the Uber app matched the account stored in Uber’s database. And as the API does all the heavy lifting in terms of face recognition, Uber saved MONTHS of development effort as well!

Summary

In this blog post, we’ve looked at the Face API, part of the Microsoft Cognitive Services stable of products. We explored some of the key features of the API and saw how easy it is to consume its endpoints and integrate them with your existing products and services. Have you used the Face API in any of your applications?

Catch up on previous Cognitive Services posts on CodeMatters:

Microsoft Cognitive Services: an introduction

Microsoft Cognitive Services: Text Analytics API

Language Understanding Intelligence Service – LUIS

The Grey Matter Managed Services team are experts at managing Azure-related projects, including Cognitive Services. You can contact them on +44 (0)1364 654100 to discuss Azure and Visual Studio options and costs, or if you require technical advice.


Jamie Maguire

http://www.jamiemaguire.net

Software Architect, Consultant, Developer, and Microsoft AI MVP. 15+ years’ experience architecting and building solutions using the .NET stack. Into tech, web, code, AI, machine learning, business and start-ups.
